Video Concept Detection

Overview

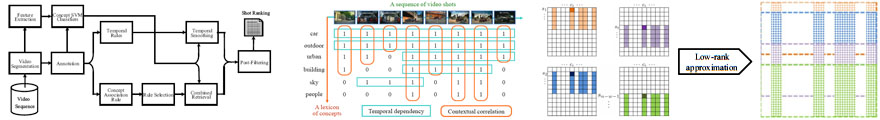

The broad availability of videos has created strong demand for effective and efficient video retrieval. Concept-based video retrieval is a promising approach, but its success depends heavily on the accuracy of concept detection. We proposed several frameworks that exploit both contextual correlation and temporal dependency to improve the accuracy of video concept detection, learning from user-provided annotations and/or detector-generated predictions.

- Learning from annotations (supervised).

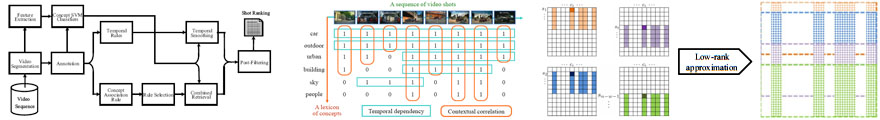

In our IEEE TMM 2008 paper, we proposed methods to learn association rules for inter-concept relations and temporal regression filters for inter-shot correlations.
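To make the idea of a temporal filter concrete, here is a minimal sketch that refines one concept's detection scores by taking a weighted average over neighboring shots. The function name `temporal_filter` and the filter weights are assumptions for illustration only; in the paper the filter coefficients are learned by regression from annotations, which this sketch does not do.

```python
def temporal_filter(scores, weights):
    """Refine each shot's score as a weighted average of nearby shots.

    scores  -- detector scores for one concept, in shot order
    weights -- odd-length filter centred on the current shot
               (hand-picked here; an assumption, not learned values)
    """
    half = len(weights) // 2
    refined = []
    for i in range(len(scores)):
        total, norm = 0.0, 0.0
        for k, w in enumerate(weights):
            j = i + k - half
            if 0 <= j < len(scores):  # ignore neighbours outside the video
                total += w * scores[j]
                norm += w
        refined.append(total / norm)  # renormalise at the boundaries
    return refined


# A low score surrounded by high scores is pulled upwards.
scores = [0.9, 0.8, 0.1, 0.85, 0.9]
smoothed = temporal_filter(scores, [0.25, 0.5, 0.25])
```

The sketch only illustrates why temporal dependency helps: an isolated low score among confident neighbors is likely a detector miss, and the filter raises it.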

In ACM MM 2008, we proposed the multi-cue fusion (MCF) method, a data-driven approach that implicitly learns semantic and temporal relations from annotations for each concept. Experiments on the TRECVID 2006 data set show that the framework is promising, improving inferred average precision by around 30% over popular baselines.
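For readers unfamiliar with the metric, the sketch below computes plain average precision over a fully judged ranked list, plus the relative gain used to report improvements. Note that this is not inferred average precision itself: TRECVID's inferred AP estimates the same quantity from incompletely judged results. The function names and the sample numbers are assumptions for illustration, not results from the paper.

```python
def average_precision(relevance):
    """Average precision of a ranked list of 0/1 relevance judgements."""
    hits = 0
    precisions = []
    for rank, rel in enumerate(relevance, start=1):
        if rel:
            hits += 1
            precisions.append(hits / rank)  # precision at each relevant rank
    return sum(precisions) / len(precisions) if precisions else 0.0


def relative_gain(baseline, improved):
    """Relative improvement of `improved` over `baseline`."""
    return (improved - baseline) / baseline


ap = average_precision([1, 0, 1, 1, 0])   # relevant shots at ranks 1, 3, 4
gain = relative_gain(0.10, 0.13)          # hypothetical scores
```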

- Learning from annotations and predictions (semi-supervised, cross-domain).

When the video domain changes between the training and test data sets, MCF is prone to overfitting because the contextual and temporal relationships differ between the labeled and unlabeled data.

We proposed a cross-domain MCF (CDMCF) method to assign unseen shots pseudo-labels based on the initial detection scores and learn significant patterns from pseudo-labels to address the domain change problem.

To reduce the risk of learning unreliable relationships from noisy pseudo-labels, CDMCF regularizes with the relationships learned from training data, which may come from different domains.
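The pseudo-labeling and regularization steps can be sketched as follows. This is a simplified illustration under stated assumptions, not the CDMCF algorithm: the threshold, the use of a conditional co-occurrence probability as the "relationship", the blending weight `lam`, and all function names are hypothetical.

```python
def pseudo_labels(scores, threshold=0.5):
    """Turn initial detection scores (shots x concepts) into 0/1 pseudo-labels."""
    return [[1 if s >= threshold else 0 for s in shot] for shot in scores]


def cooccurrence(labels, a, b):
    """Estimate P(concept b | concept a) from binary labels."""
    with_a = [shot for shot in labels if shot[a] == 1]
    if not with_a:
        return 0.0
    return sum(shot[b] for shot in with_a) / len(with_a)


def regularized(target_est, source_est, lam=0.5):
    """Blend the noisy target-domain estimate with the source-domain one."""
    return lam * source_est + (1 - lam) * target_est


# Initial detector scores on unlabeled target-domain shots (made-up values).
target_scores = [[0.9, 0.8], [0.7, 0.2], [0.1, 0.6], [0.8, 0.9]]
labels = pseudo_labels(target_scores)
target_est = cooccurrence(labels, a=0, b=1)

# Relationship learned from annotated training data (hypothetical value).
source_est = 0.9
blended = regularized(target_est, source_est, lam=0.5)
```

A larger `lam` trusts the annotated source domain more; a smaller one trusts the pseudo-labels from the target domain more.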

Performance gains range from 27% to 61% across different settings. The results have been published in IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI).

- Learning from detector-generated predictions (unsupervised).

Previous methods rely on expensive user annotations to acquire reliable knowledge.

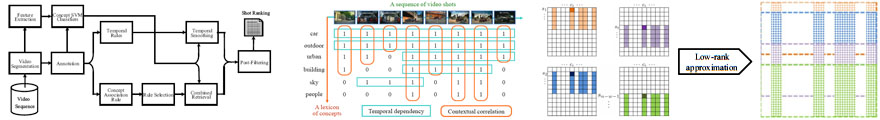

We further proposed an unsupervised method to refine the inaccurate scores generated by concept classifiers by taking into account the concept-to-concept correlation and shot-to-shot similarity embedded within the score matrix via matrix factorization.
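As a rough illustration of score refinement by matrix factorization, the sketch below approximates a shots-by-concepts score matrix with a low-rank product and uses the reconstruction as the refined scores. It is a generic regularized factorization trained by stochastic gradient descent, assumed here for illustration; the rank, learning rate, regularization weight, and function names are not taken from the paper, whose formulation may differ.

```python
import random


def factorize(S, rank=1, steps=2000, lr=0.05, reg=0.01, seed=0):
    """Approximate S (shots x concepts) as U * V^T by SGD on squared error."""
    rng = random.Random(seed)
    n, m = len(S), len(S[0])
    U = [[rng.uniform(0.1, 0.5) for _ in range(rank)] for _ in range(n)]
    V = [[rng.uniform(0.1, 0.5) for _ in range(rank)] for _ in range(m)]
    for _ in range(steps):
        for i in range(n):
            for j in range(m):
                pred = sum(U[i][k] * V[j][k] for k in range(rank))
                err = S[i][j] - pred
                for k in range(rank):
                    u, v = U[i][k], V[j][k]
                    # Gradient step with L2 regularisation on both factors.
                    U[i][k] += lr * (err * v - reg * u)
                    V[j][k] += lr * (err * u - reg * v)
    return U, V


def reconstruct(U, V):
    """Low-rank reconstruction, used here as the refined score matrix."""
    return [[sum(u * v for u, v in zip(ui, vj)) for vj in V] for ui in U]


# Made-up scores: the last shot breaks the pattern shared by the others.
S = [[0.8, 0.4], [0.6, 0.3], [0.4, 0.2], [0.2, 0.6]]
U, V = factorize(S, rank=1)
refined = reconstruct(U, V)
```

Because the low-rank model can only represent patterns shared across shots and concepts, scores that disagree with the dominant structure are pulled toward it, which is the intuition behind refining noisy detector outputs without annotations.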

Experiments on the TRECVID 2006-2008 datasets demonstrate relative performance gains ranging from 13% to 52% without using any user annotations or external knowledge resources.

The paper has been published in ACM TOMCCAP.

Publications

- Cross-domain Multi-cue Fusion for Concept-based Video Indexing
  - Ming-Fang Weng, Yung-Yu Chuang
  - IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), October 2012

- Collaborative Video Re-indexing via Matrix Factorization
  - Ming-Fang Weng, Yung-Yu Chuang
  - ACM Transactions on Multimedia Computing, Communications and Applications (TOMCCAP), May 2012

- Multi-Cue Fusion for Semantic Video Indexing
  - Ming-Fang Weng, Yung-Yu Chuang
  - ACM Multimedia 2008

- Association and Temporal Rule Mining for Post-Processing of Semantic Concept Detection in Video
  - Ken-Hao Liu, Ming-Fang Weng, Chi-Tao Tseng, Yung-Yu Chuang, Ming-Syan Chen
  - IEEE Transactions on Multimedia, February 2008

Support

This research is supported by:

- NSC100-2628-E-002-009

- NSC100-2622-E-002-016-CC2

- NSC99-2628-E-002-015

- NSC99-2811-E-002-096

- NSC96-2622-E-002-002

- NSC95-2622-E-002-018

cyy -a-t- csie.ntu.edu.tw